The problem with using GIT option in ADF is thatġ- You can not cherry pick your pipeline deployment, For example if you have 10 Pipeline in a ADF and you just want to deploy one of them you can'tĢ- changing a CONNECTION object is not easy and in some cases not possible, for example a developer develops a ADF with 10 pipelines, the connection object are NOT using Azure KeyVault (AKV) and you want to change the connection objects to use AKV I add a new description to my pipeline with Email notifications:

Knowing, that all my ADF objects are now stored in GitHub, let's see if a code change from Azure Data Factory will be synchronized there.

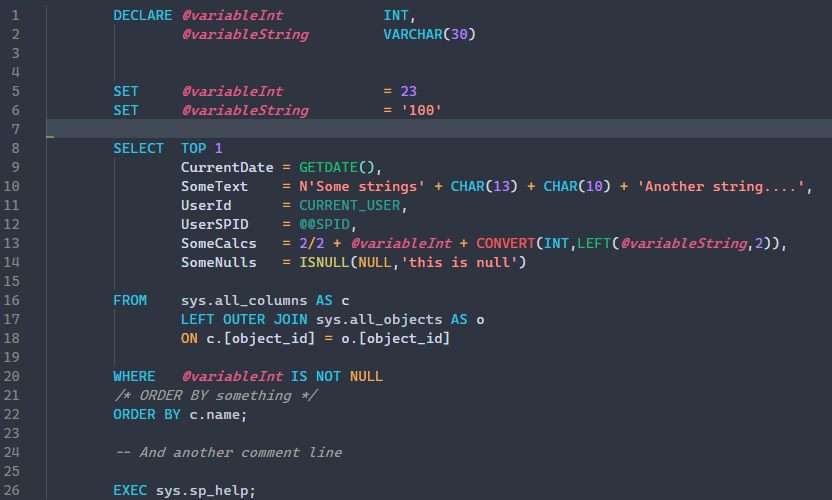

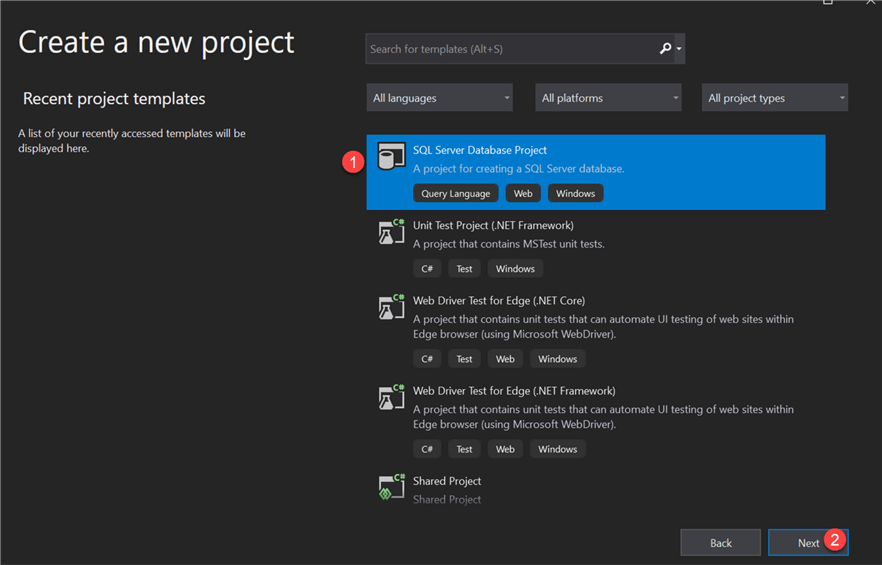

Step 3: Testing your further changes in ADF pipelines Source code integration allowed me to save all my AFD artifacts: pipelines, datasets, linked services, and triggers.Īnd that's where I can see all my four ADF pipelines: You can validate them all in the GitHub itself. Let me show you how I did this using my personal GitHub account you can do this with enterprise GitHub accounts as well.Ī) Open your existing Azure Data Factory and select the " Set up Code Repository" option from the top left "Data Factory" menu:ī) then choose " GitHub" as your Repository Type:Ĭ) and make sure you authenticate your GitHub repository with the Azure Data Factory itself:Īfter selecting an appropriate GitHub code repository for your ADF artifacts and pressing Save button: Has a corresponding pipeline created in my Azure Data Factory:Īnd all of them are now publically available in this GitHub repository: So now is the day to put all my ADF pipeline samples to my personal GitHub repository.ġ) Setting Variables in Azure Data Factory PipelinesĢ) Append Variable activity in Azure Data Factory: Story of combining things togetherģ) System Variables in Azure Data Factory: Your Everyday ToolboxĤ) Email Notifications in Azure Data Factory: Failure is not an option Which was a great improvement from a team development perspective. This hasn't been the best practice from my side, and I needed to start using a source control tool to preserve and version my development code.īack in August of 2018, Microsoft introduced GitHub integration for Azure Data Factory objects. all changes had to be published in order to be saved. My ADF pipelines is a cloud version of previously used ETL projects in SQL Server SSIS.Īnd prior to this point, all my sample ADF pipelines were developed in so-called "Live Data Factory Mode" using my personal workspace, i.e. (2019-Feb-06) Working with Azure Data Factory (ADF) enables me to build and monitor my Extract Transform Load (ETL) workflows in Azure.
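Once the ADF artifacts live in GitHub as JSON files, the Key Vault concern mentioned in this post can at least be audited from a local clone. Below is a minimal sketch, not an official tool. It assumes the linked services sit in a `linkedService` folder at the repository root, and that a Key Vault-backed secret shows up in the JSON as an object whose `type` is `AzureKeyVaultSecret`. The function names `uses_key_vault` and `linked_services_without_akv` are hypothetical, chosen only for this illustration.

```python
import json
from pathlib import Path
from typing import Any, List


def uses_key_vault(node: Any) -> bool:
    """Return True if any nested object is an Azure Key Vault secret reference."""
    if isinstance(node, dict):
        # Assumption: AKV-backed secrets are marked with this type value.
        if node.get("type") == "AzureKeyVaultSecret":
            return True
        return any(uses_key_vault(value) for value in node.values())
    if isinstance(node, list):
        return any(uses_key_vault(item) for item in node)
    return False


def linked_services_without_akv(repo_root: str, folder: str = "linkedService") -> List[str]:
    """List linked services in a local clone that contain no Key Vault secret reference."""
    flagged = []
    # Assumption: one JSON file per linked service inside `folder`.
    for path in sorted(Path(repo_root, folder).glob("*.json")):
        # utf-8-sig tolerates files saved with or without a byte order mark.
        definition = json.loads(path.read_text(encoding="utf-8-sig"))
        properties = definition.get("properties", {})
        # The Key Vault linked service itself is the secret store, so skip it.
        if properties.get("type") == "AzureKeyVault":
            continue
        if not uses_key_vault(properties.get("typeProperties", {})):
            flagged.append(definition.get("name", path.stem))
    return flagged
```

Calling `linked_services_without_akv("path/to/local/clone")` returns the names to review. A flagged linked service is not necessarily wrong: one that authenticates without any stored secret would also appear in the list, so treat the result as a starting point for a manual check.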
